9·4 months ago

I think I know what the issue is.

2·4 months ago

They probably mean they don’t provide support through the official channels because the support is experimental; instead, you’d report bugs directly to the kdeconnect-mac repo.

8·4 months ago

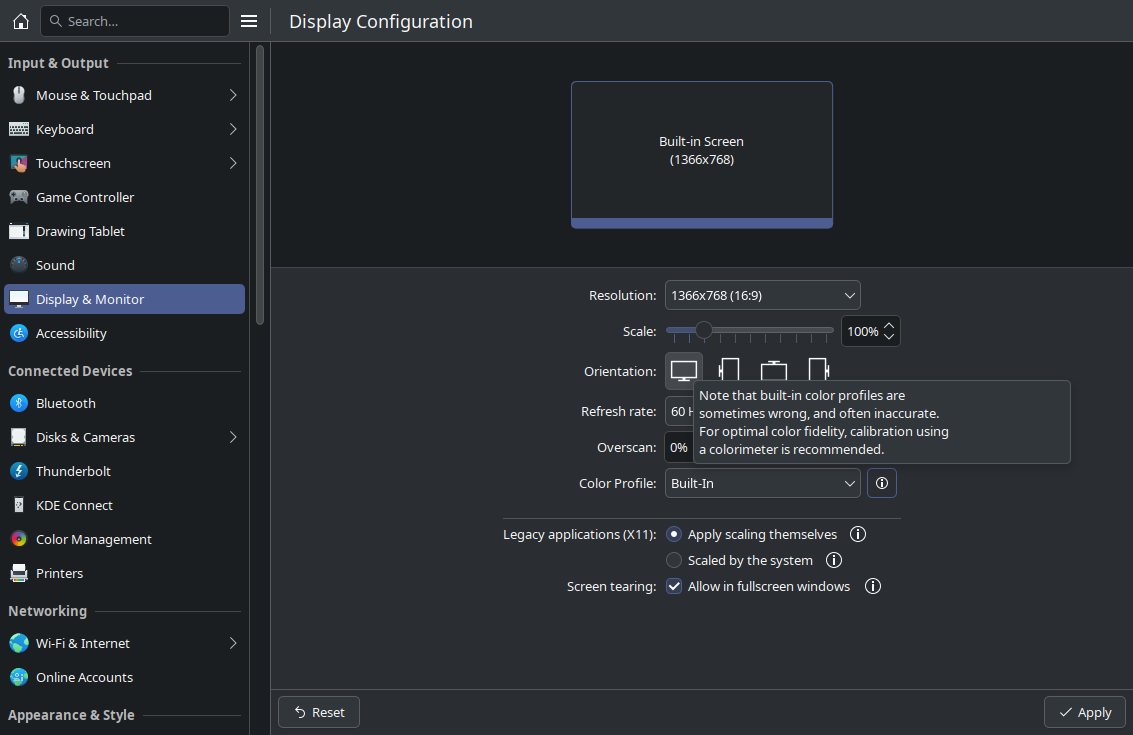

Your colors are inverted.

The accessibility icon never follows the accent color, and the firewall icon is always red, not blue. Something is swapping your colors around.

Maybe color correction for the color blind?

Blue -> Yellow

Red -> Blue

4·5 months ago

Ofc it’s prone to bullshitting, it can’t even stay consistent; shit will contradict itself and cite the same sources.

9·5 months ago

Why do you think Trump started peddling Jesus bullshit last time he ran, even though he’s not even remotely Christian or religious? He’ll shill anything as long as he thinks it’ll profit him.

9·5 months ago

The fact that people bought NFTs just proves that crypto bros buy into hype without understanding the technology.

I knew NFTs were bullshit from the start because I actually took the time to understand how they “worked”.

34·5 months ago

That goes for both…

4·5 months ago

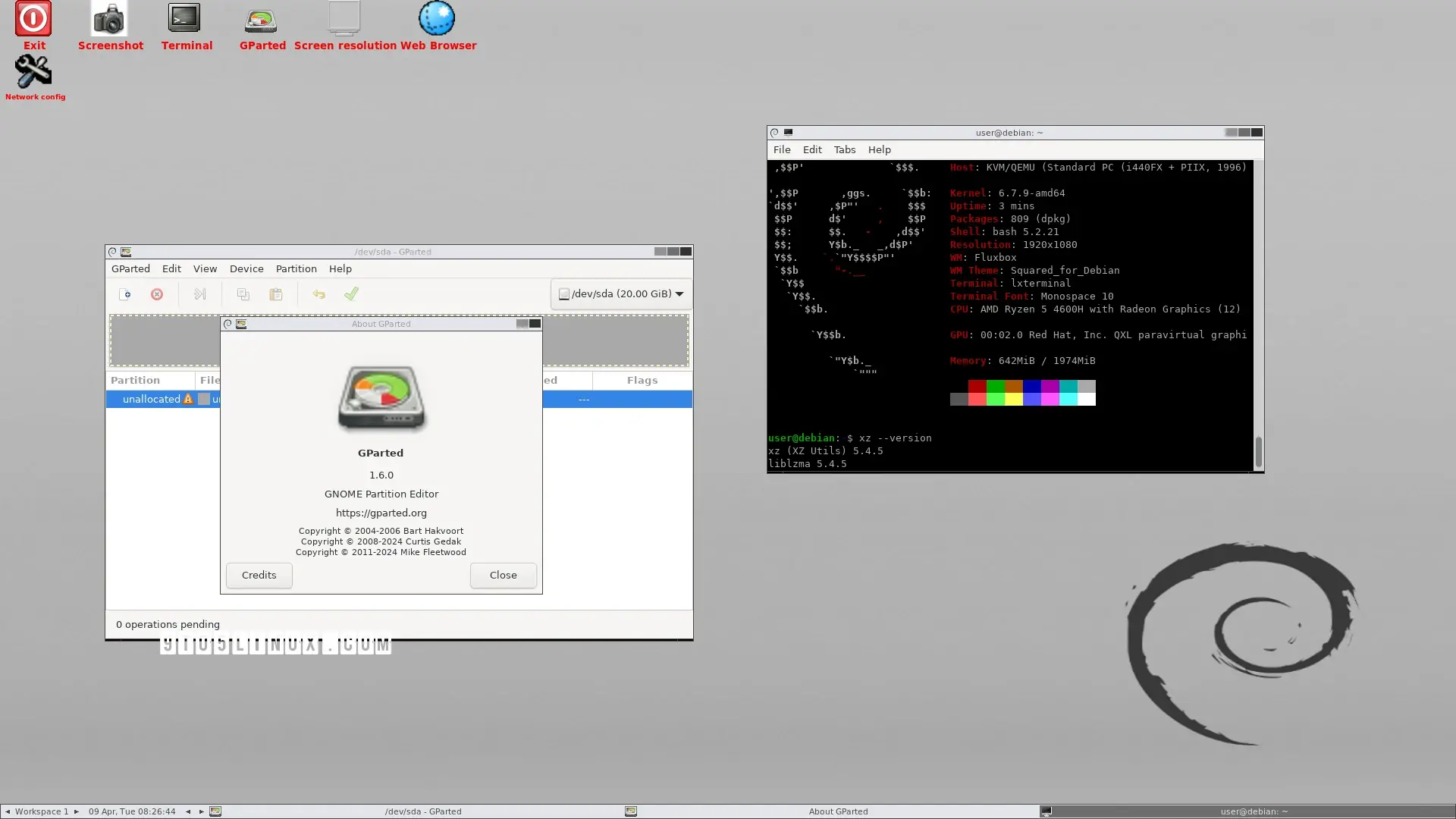

Windows native games are shown…

10·5 months ago

Yes. They’re live, so feel free to ask them about it. They can explain it much better than I ever could.

4·5 months ago

We’re discussing Apple’s implementation of an OS-level AI; it’s entirely relevant.

GrapheneOS has technical merit and is completely open source. In fact, many of the security improvements to Android/AOSP are from GrapheneOS.

I love Olan’s.

Who?

5·5 months ago

Yeah, and Apple is completely untrustworthy like any other corporation, my point exactly. Idk about you, but I’ll stick to what I can verify the security & privacy of for myself, e.g. Ollama, GrapheneOS, Linux, Coreboot, Libreboot/Canoeboot, etc.

4·5 months ago

However, to process more sophisticated requests, Apple Intelligence needs to be able to enlist help from larger, more complex models in the cloud. For these cloud requests to live up to the security and privacy guarantees that our users expect from our devices, the traditional cloud service security model isn’t a viable starting point. Instead, we need to bring our industry-leading device security model, for the first time ever, to the cloud.

As stated above, Private Cloud Compute (PCC) has nothing to do with the OS-level AI itself. ರ_ರ That’s in the cloud, not on device.

While we’re publishing the binary images of every production PCC build, to further aid research we will periodically also publish a subset of the security-critical PCC source code.

As stated here, it still has the same issue of not being 100% verifiable. They only publish a few code snippets they deem “security-critical”, which doesn’t allow us to verify the handling of user data.

- It’s difficult to provide runtime transparency for AI in the cloud.

Cloud AI services are opaque: providers do not typically specify details of the software stack they are using to run their services, and those details are often considered proprietary. Even if a cloud AI service relied only on open source software, which is inspectable by security researchers, there is no widely deployed way for a user device (or browser) to confirm that the service it’s connecting to is running an unmodified version of the software that it purports to run, or to detect that the software running on the service has changed.

Adding to what it says here, if the on-device AI is compromised in any way, be it by an attacker or by Apple themselves, then PCC is rendered irrelevant regardless of whether PCC is open source or not.

Additionally, I’ll raise the issue that this entire blog is nothing but just that, a blog. Nothing stated here is legally binding, so any claims about how they handle user data are irrelevant and can easily be dismissed as marketing.

2·5 months ago

AI-powered rootkit.

61·5 months ago

Their keynotes are irrelevant; their official privacy policies and legal disclosures take precedence over marketing claims or statements made in keynotes or presentations. Apple’s privacy policy states that the company collects data necessary to provide and improve its products and services. The OS-level AI would fall under this category, allowing Apple to collect data processed by the AI for improving its functionality and models. Apple’s keynotes and marketing materials do not carry legal weight when it comes to their data practices. With the AI system operating at the OS level, it likely has access to a wide range of user data, including text inputs, conversations, and potentially other sensitive information.

5·5 months ago

Apple claimed that their privacy could be independently audited and verified.

How? The only way to truly be able to do that to a 100% verifiable degree is if it were open source, and I highly doubt Apple would do that, especially considering its OS-level integration. At best, they’d probably only have a self-report mechanism, which would also likely be proprietary and therefore not verifiable in itself.

61·5 months ago

- Malicious actors could exploit vulnerabilities in the AI system to gain unauthorized access or control over device functions and data, potentially leading to severe privacy breaches, unauthorized data access, or even the ability to inject malicious content or commands through the AI system.

- Privacy breaches are possible if the AI system is compromised, exposing user data, activities, and conversations processed by the AI.

- Integrating AI functionality deeply into the operating system increases the overall attack surface, providing more potential entry points for malicious actors to exploit vulnerabilities and gain unauthorized access or control.

- Human reviewers have access to annotate and process user conversations for improving the AI models. To effectively train and improve the AI models powering the OS-level integration, Apple would likely need to collect and process user data, such as text inputs, conversations, and interactions with the AI.

- Apple’s privacy policy states that the company collects data necessary to provide and improve its products and services. The OS-level AI would fall under this category, allowing Apple to collect data processed by the AI for improving its functionality and models.

- Despite privacy claims, Apple has a history of collecting various types of user data, including device usage, location, health data, and more, as outlined in their privacy policies.

- If Apple partners with third-party AI providers, there is a possibility of user data being shared or accessed by those entities, as permitted by Apple’s privacy policy.

- With the AI system operating at the OS level, it likely has access to a wide range of user data, including text inputs, conversations, and potentially other sensitive information. This raises privacy concerns about how this data is handled, stored, and potentially shared or accessed by the AI provider or other parties.

- Users may lack transparency about when and how their data is being processed by the AI system, and may not be fully informed about data collection related to the AI. Additionally, if the AI integration is controlled solely at the OS level, users may have limited control over enabling or disabling this functionality.

81·5 months ago

Yes, on Android. From my own investigations, it appears to have a really bad malware problem despite its claims of scanning for malware, especially in the free distributions of paid apps from the Google Play Store, which constitute piracy and copyright infringement and raise ethical issues.

91·5 months ago

you can use it in almost any app

if done right

How are you going to be able to use it in “almost any app” in a way that is secure? How are you going to design it so that the apps don’t abuse the AI to get more information on the user out of it than intended? Seems pretty damn inherently insecure to me.

Nice.

Don’t forget to put [Solved] in your post title.